Folding context

Context windows, compression, and "folding the dough"

Here’s a funny move I find myself making lately:

1. Encounter a complex programming challenge.
2. Get three papers related to my problem.
3. Open three terminal windows, have separate Claudes read each paper.
4. Ask some questions about each paper.
5. Save a summary of each conversation to an .md file.
6. /clear
7. Have Claude read all three summaries. Generate a synthesis with a comparison table.
8. Save to synthesis.md.
9. /clear
10. Include @synthesis.md and brainstorm with Claude.
11. A solution appears.
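The same move can be written down as a loop. This is only a minimal sketch, not a description of how Claude Code works internally: `ask` is a hypothetical stand-in for whatever model call you use, and `fold_context`, the prompts, and the summary layout are all invented for illustration. What it tries to show is the shape of the fold: each source gets its own fresh context, and the final synthesis sees only the summaries.

```python
from typing import Callable

# Hypothetical stand-in for a model call: takes a prompt, returns text.
Ask = Callable[[str], str]


def fold_context(sources: dict[str, str], questions: list[str], ask: Ask) -> str:
    """Diverge into one context per source, then converge on the summaries."""
    summaries: dict[str, str] = {}
    for name, text in sources.items():
        # A fresh context per source: nothing from the other sources leaks in.
        context = [f"Source: {name}\n{text}"]
        for question in questions:
            answer = ask("\n\n".join(context + [question]))
            context += [question, answer]
        # Compress the whole conversation down to a summary.
        summaries[name] = ask("\n\n".join(context + ["Summarize this conversation."]))

    # The equivalent of /clear: the synthesis prompt contains only the summaries.
    folded = "\n\n".join(f"## {name}\n{summary}" for name, summary in summaries.items())
    return ask(f"{folded}\n\nSynthesize these summaries and add a comparison table.")
```

Doing this by hand still has one advantage over the loop: the questions change from source to source, and from answer to answer, because a person is steering each thread.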

This works great for learning speed-runs, too.

1. Go to plato.stanford.edu and get pages for three different philosophers (Plato, Kant, Nietzsche, say).
2. Open three terminal windows, have separate Claudes read each page.
3. Ask some questions about each philosopher.
4. Save a summary of each conversation to an .md file.
5. /clear
6. Have Claude read all three summaries, synthesize, compare and contrast.
7. Save to synthesis.md.
8. /clear
9. Include @synthesis.md and have a conversation.

(Claude Code is an excellent tool for thought, by the way.)

I think of this move as folding context, because it feels like folding dough when baking. The results I get from the AI are much, much better when I fold the context.

Why is folding context so effective? Well, it’s almost like we’re doing Deep Research by hand. Each Claude instance is functioning as a research agent. Critically, these are research agents that we are guiding manually. In each thread, we’re asking questions that draw out the dimensions of context that are most meaningful to our larger question. It’s a kind of smart compression, and compression = intelligence, or close enough.

Context folding also creates useful byproducts. These little markdown files are valuable compressed insights that you can include in future conversations to infuse them with deep contextual background. Our team has started checking them into the codebase under the docs directory.

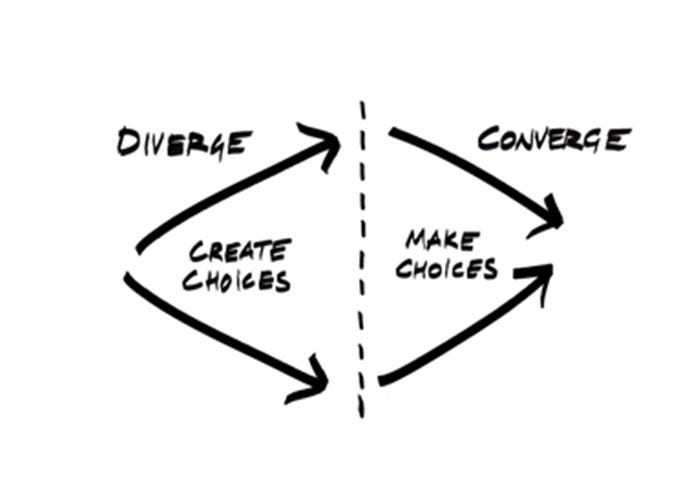

Context folding is one example of a more general pattern for provoking effective behavior from LLMs. When we fold context, we diverge, exploring separate paths in different context windows. After exploring the solution space, we converge, comparing what we have learned, and synthesizing everything together.

Claude Code’s Plan mode is another example of this pattern. First Claude diverges, exploring the codebase with sub-agents, then Claude converges, writing up a plan that synthesizes the findings.

Designers call this process the diamond model. When using the diamond model, we often do two passes, one for research, one for development. Most AI tools today do just one pass (Plan mode). Are there gains to be had in doing more than one pass?

Cyberneticist Horst Rittel situates the diamond model within a larger theoretical framework. He says design is “the generation of variety and the reduction of variety.”

Variety here means cybernetic variety, as in the set of all possible behaviors that a system can generate.

Ashby’s Law of Requisite Variety: The variety of a regulator must be at least as large as that of the system it regulates.

Ashby’s Law tells us that a solution must be at least as complex as the problem. If it isn’t, the problem spills over, routes around the solution, becomes an externality. This is useful information. It tells us that to solve a problem, the LLM has to have at least as much variety as the problem.
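A toy regulation game can make the law concrete. Everything in this sketch is an illustrative assumption: the function name, the choice of six disturbances, and the outcome rule `(d - r) % n`, which is picked only because no single response maps two different disturbances to the same outcome. The code brute-forces every strategy a regulator could follow and reports the smallest number of distinct outcomes it can hold the system to.

```python
from itertools import product


def min_outcome_variety(n_disturbances: int, n_responses: int) -> int:
    """Smallest number of distinct outcomes any regulator strategy can achieve.

    Toy rule (an assumption): outcome = (disturbance - response) mod n_disturbances.
    A strategy assigns one response to each possible disturbance.
    """
    best = n_disturbances
    for strategy in product(range(n_responses), repeat=n_disturbances):
        outcomes = {(d - r) % n_disturbances for d, r in enumerate(strategy)}
        best = min(best, len(outcomes))
    return best


for responses in (1, 2, 3, 6):
    print(responses, min_outcome_variety(6, responses))
# prints one pair per line: 1 6, 2 3, 3 2, 6 1
```

With six possible disturbances, the regulator can only pin the outcome to a single value once it has six responses of its own. With fewer, the leftover variety leaks through into the outcome, which is the spillover described above.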

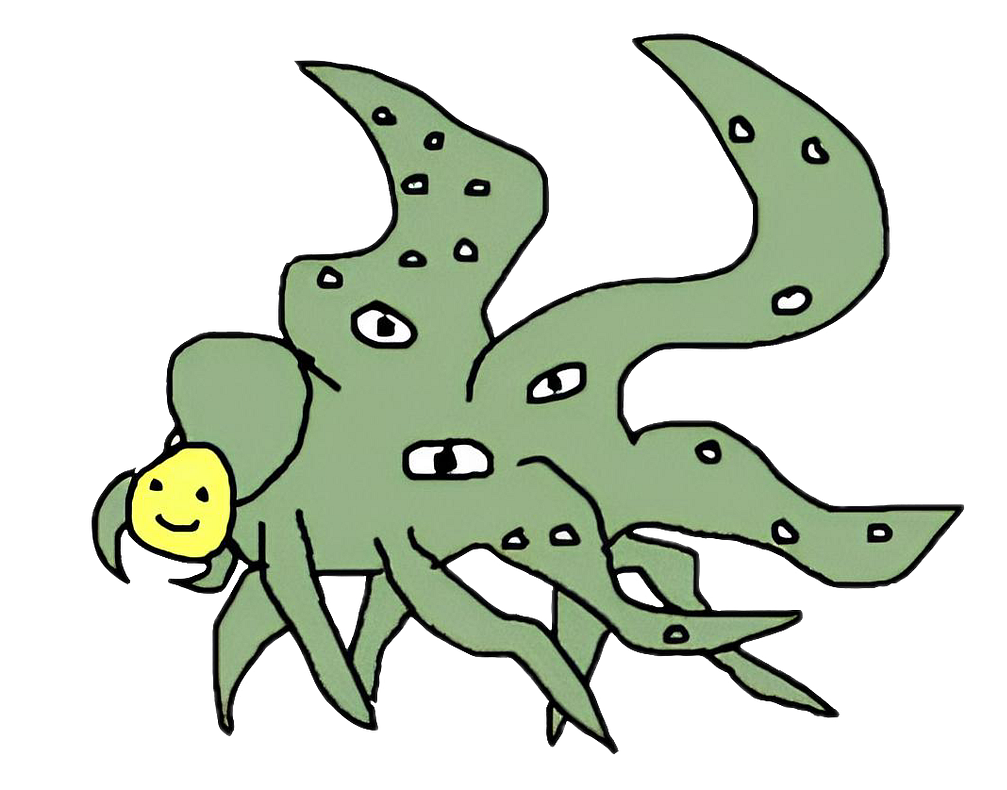

And LLMs do have a lot of cybernetic variety. An internet’s worth. This extreme variety manifests when you run a raw base model. What you get back is inhuman, the rantings of someone channeling hyperdimensional cosmic horrors. A base model’s variety is way beyond the range of normal human behavior.

So, LLMs have a lot of variety. Too much variety to fit within the tiny reference frame of human meanings. The solution? Slap a smiley face on top. RLHF reduces the variety of LLMs to meaningful ranges.

But RLHF reduces variety almost too much. The model comes away with a strong tendency to drift toward oatmeal. It has lost the requisite variety to surprise.

But being in control means defining the range of what will be considered, that is, the range of the possible. In effect, when I am in control I restrict the world to what I can imagine or permit…

(Ranulph Glanville, 2019, “Design Cybernetics”)

We can bring back some of this variety through the context window. LLMs are highly steerable via the context window, and through prompting, you can sort of pluck at the latent dimensions of the model, drawing out distinct behaviors.

This is why putting an LLM in a loop with tools makes it so much smarter. Tools add contextual variety to the context window, which educes latent variety from the model. The conversation between model and outside context generates meaningful behavior that has the variety to surprise.
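A bare-bones version of that loop might look like the following. It is a sketch under stated assumptions, not any particular framework's API: `Model`, `Tool`, and `run_agent` are hypothetical names, and the model is assumed to return either a tool name with an argument or the literal action `"final"` with its answer.

```python
from typing import Callable

# Hypothetical interfaces, for illustration only.
Tool = Callable[[str], str]
# A model maps the context so far to (action, payload), where action is
# either a tool name or the literal "final".
Model = Callable[[list[str]], tuple[str, str]]


def run_agent(model: Model, tools: dict[str, Tool], task: str, max_steps: int = 5) -> str:
    """Alternate between the model and its tools until the model answers."""
    context = [task]
    for _ in range(max_steps):
        action, payload = model(context)
        if action == "final":
            return payload
        # The tool result is information from outside the model. Appending it
        # is what adds new variety to the context window.
        result = tools[action](payload)
        context.append(f"{action}({payload!r}) -> {result}")
    return "stopped: step limit reached"
```

The step limit is the only control in this sketch. A tool name the model makes up raises a `KeyError`, and a real loop would need to decide how much of that kind of surprise to tolerate.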

OpenClaw is an extreme example here. When you give a claw full access to your computer, it imports the variety of a high-level Turing machine into the agentic loop. That’s a lot of variety, potentially unbounded. Some of that variety is deeply surprising, like when OpenClaw’s creator discovered it could figure out how to do voice transcription on its own. Some of that variety is negatively surprising, like when you ask your claw to tidy up your inbox, and it deletes all of your emails. Either way, a claw with full bash access has more than enough variety to surprise.

The great benefit of not having enough variety to control a system is that, if I give up trying to control, I can discover many possibilities I would have excluded if I had insisted on being in control. These possibilities are unexpected, outside my frame of reference, in a word, novel.

(Ranulph Glanville, 2019, “Design Cybernetics”)

From the standpoint of requisite variety, folding context makes a lot of sense. We’re letting our agents’ understandings diverge, specialize, and spin off in unexpected directions. The difference in their understandings, the contradiction, is alpha, valuable variety.

Do I contradict myself?

Very well then I contradict myself,

(I am large, I contain multitudes.)

(Walt Whitman, “Song of Myself”)

Questions I’m asking myself:

Where does the variety live? In the agent? In me? In the loop between the agent and me?

Who has requisite variety? Who is steering the system? The agent? Me? Neither? Do we have requisite variety together?

Can I increase variety by spinning up agents in divergent roles? Busytown is my current attempt at this, a multi-agent swarm coordinating over SQLite.

Is the variety manifesting within meaningful parts of the possibility space? What is meaningful to me? Over the short term? Over the long term? Can I craft agentic systems that manifest deeper meaning over time?

Erich Fromm said that creativity requires the courage to let go of certainties. Is my notion of meaning constraining me to oatmeal? Do I need to increase the variety of my agentic systems? Do I need to lose some control? Where can I embrace threats to meaning, to generate creative breakthroughs?